It’s often good security behaviour to require users to re-enter their password when they want to change some sensitive property of their account, like generating personal access tokens or changing their Multi-factor Authentication (MFA) settings.

You may have seen the GitHub ‘sudo’ mode, which asks you to re-enter your password when you try to change something sensitive.

Most of the time a user’s session is long-lived, so when they want to do something sensitive, it’s best to check they are still who they say they are.

I’ve been working on the implementation of IdentityServer4 at Enclave for the past week or so, and had a requirement for password confirmation before users can modify their MFA settings.

I thought I’d write up how I did this for posterity, because it took a little figuring out.

The Layout

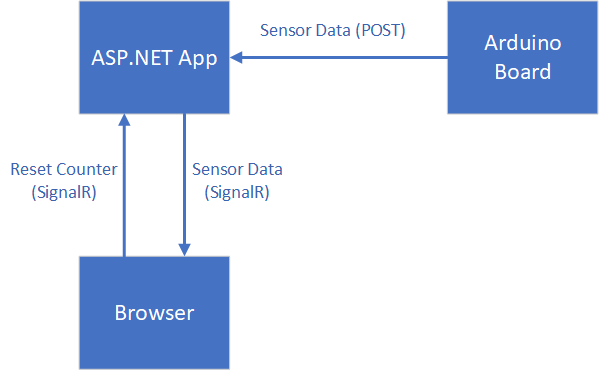

In our application, we have two components, both running on ASP.NET Core 3.1:

- The Accounts app that holds all the user data; this is where Identity Server runs; we use ASP.NET Core Identity to do the actual user management.

- The Portal app that holds the UI. This is a straightforward MVC app right now, no JS or SPA to worry about.

To make changes to a user’s account settings, the Profile Controller in the Portal app makes API calls to the Accounts app.

All the API calls to the Accounts app are already secured using the Access Token from when the user logged in; we have an ASP.NET Core Policy in place for our additional API (as per the IdentityServer docs) to protect it.

The Goal

The desired outcome here is that specific sensitive API endpoints within the Accounts app require the calling user to have undergone a second verification, where they must have re-entered their password recently in order to use the API.

What we want to do is:

- Allow the Portal app to request a ‘step-up’ access token from the Accounts app.

- Limit the step-up access token to a short lifetime (say 15 minutes), with no refresh tokens.

- Call a sensitive API on the Accounts app, and have the Accounts app validate the step-up token.

Issuing the Step-Up Token

First up, we need to generate a suitable access token when asked. I’m going to add a new controller, StepUpApiController, in the Accounts app.

This controller is going to have a single endpoint, which requires a regular access token before you can call it.

We’re going to use the provided IdentityServerTools class, that we can inject into our controller, to do the actual token generation.

Without further ado, let’s look at the code for the controller:

```csharp
[Route("api/stepup")]
[ApiController]
[Authorize(ApiScopePolicy.WriteUser)]
public class StepUpApiController : ControllerBase
{
    private static readonly TimeSpan ValidPeriod = TimeSpan.FromMinutes(15);

    private readonly UserManager<ApplicationUser> _userManager;
    private readonly IdentityServerTools _idTools;

    public StepUpApiController(UserManager<ApplicationUser> userManager,
                               IdentityServerTools idTools)
    {
        _userManager = userManager;
        _idTools = idTools;
    }

    [HttpPost]
    public async Task<StepUpApiResponse> StepUp(StepUpApiModel model)
    {
        var user = await _userManager.GetUserAsync(User);

        // Verify the provided password.
        if (await _userManager.CheckPasswordAsync(user, model.Password))
        {
            var clientId = User.FindFirstValue(JwtClaimTypes.ClientId);

            var claims = new Claim[]
            {
                new Claim(JwtClaimTypes.Subject, User.FindFirstValue(JwtClaimTypes.Subject)),
            };

            // Create a token that:
            //  - Is associated to the User's client.
            //  - Is only valid for our configured period (15 minutes)
            //  - Has a single scope, indicating that the token can only be used for stepping up.
            //  - Has the same subject as the user.
            var token = await _idTools.IssueClientJwtAsync(
                clientId,
                (int)ValidPeriod.TotalSeconds,
                new[] { "account-stepup" },
                additionalClaims: claims);

            return new StepUpApiResponse { Token = token, ValidUntil = DateTime.UtcNow.Add(ValidPeriod) };
        }

        Response.StatusCode = StatusCodes.Status401Unauthorized;

        return null;
    }
}
```

A couple of important points here:

- In order to even access this API, the normal access token being passed in the request must conform to our own WriteUser scope policy, which requires a particular scope be in the access token to get to this API.

- This generated access token is really basic; it has a single scope, “account-stepup”, and only a single additional claim containing the subject.

- We associate the step-up token to the same client ID as the normal access token, so only the requesting client can use that token.

- We explicitly state a relatively short lifetime on the token (15 minutes here).
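The controller refers to StepUpApiModel and StepUpApiResponse without showing them. Going only by the properties the controller touches (model.Password, Token and ValidUntil), they could be as small as the sketch below. These shapes are an assumption; the real classes may well carry validation attributes or extra fields.

```csharp
using System;

// Hypothetical request body: just the password the user re-entered.
public class StepUpApiModel
{
    public string Password { get; set; }
}

// Hypothetical response body: the issued JWT and the time it stops being valid (UTC).
public class StepUpApiResponse
{
    public string Token { get; set; }

    public DateTime ValidUntil { get; set; }
}
```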

Sending the Token

This is the easy bit; once you have the token, you can store it somewhere in the client, and send it in a subsequent request.
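Getting the token in the first place means posting the re-entered password to the api/stepup endpoint from earlier. Here is a rough sketch of how the Portal side might do that. StepUpClient, StepUpResult and ParseResponse are hypothetical names of mine, the JSON property names assume ASP.NET Core's default camel-case output, and the HttpClient passed in is assumed to already have its BaseAddress and the user's normal Bearer token set.

```csharp
using System;
using System.Net;
using System.Net.Http;
using System.Text;
using System.Text.Json;
using System.Threading.Tasks;

// Hypothetical Portal-side copy of the step-up response.
public class StepUpResult
{
    public string Token { get; set; }

    public DateTime ValidUntil { get; set; }
}

public static class StepUpClient
{
    // Case-insensitive so "token"/"validUntil" map onto Token/ValidUntil.
    private static readonly JsonSerializerOptions JsonOptions =
        new JsonSerializerOptions { PropertyNameCaseInsensitive = true };

    // Posts the password to the step-up endpoint.
    // Returns null when the Accounts app answers 401 (wrong password).
    public static async Task<StepUpResult> RequestStepUpAsync(HttpClient client, string password)
    {
        var body = JsonSerializer.Serialize(new { password });

        using var content = new StringContent(body, Encoding.UTF8, "application/json");
        using var response = await client.PostAsync("api/stepup", content);

        if (response.StatusCode == HttpStatusCode.Unauthorized)
        {
            return null;
        }

        // Anything else that isn't a success is unexpected, so let it throw.
        response.EnsureSuccessStatusCode();

        return ParseResponse(await response.Content.ReadAsStringAsync());
    }

    // Split out so the JSON handling can be checked without a server.
    public static StepUpResult ParseResponse(string json)
    {
        return JsonSerializer.Deserialize<StepUpResult>(json, JsonOptions);
    }
}
```

A null result is the cue to show the password prompt again with an error message.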

Before sending the step-up token, you’ll want to check the expiry on it, and if you need a new one, then prompt the user for their credentials and start the process again.
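That expiry check can be a tiny helper. The sketch below is one possible shape, not what we actually run: StepUpTokenCache and the 30-second safety margin are my own assumptions, and you would need one instance per user, kept wherever you hold per-user state (the session, for example).

```csharp
using System;

public class StepUpTokenCache
{
    // Treat the token as expired slightly early, to allow for clock drift
    // and the time the request spends in flight. The value is an assumption.
    private static readonly TimeSpan SafetyMargin = TimeSpan.FromSeconds(30);

    private string _token;
    private DateTime _validUntilUtc;

    // Remember a freshly issued token and its expiry (UTC).
    public void Store(string token, DateTime validUntilUtc)
    {
        _token = token;
        _validUntilUtc = validUntilUtc;
    }

    // Returns true and the token if it is still usable at 'nowUtc'.
    // Returns false if there is no token or it is too close to expiry,
    // in which case the user should be prompted for their password again.
    public bool TryGet(DateTime nowUtc, out string token)
    {
        if (_token != null && nowUtc + SafetyMargin < _validUntilUtc)
        {
            token = _token;
            return true;
        }

        token = null;
        return false;
    }
}
```

Passing the current time in, rather than reading DateTime.UtcNow inside the class, keeps the check easy to unit test.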

For any request to the sensitive API, we need to include both the normal access token from the user’s session, plus the new step-up token.

I set this up when I create the HttpClient:

```csharp
private async Task<HttpClient> GetClient(string? stepUpToken = null)
{
    var client = new HttpClient();

    // Set the base address to the URL of our Accounts app.
    client.BaseAddress = _accountUrl;

    // Get the regular user access token in the session and add that as the normal
    // Authorization Bearer token.
    // _contextAccessor is an instance of IHttpContextAccessor.
    var accessToken = await _contextAccessor.HttpContext.GetUserAccessTokenAsync();

    client.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("Bearer", accessToken);

    if (stepUpToken is object)
    {
        // We have a step-up token; include it as an additional header (without the Bearer indicator).
        client.DefaultRequestHeaders.Add("X-Authorization-StepUp", stepUpToken);
    }

    return client;
}
```

That X-Authorization-StepUp header is where we’re going to look when checking for the token in the Accounts app.

Validating the Step-Up Token

To validate a provided step-up token in the Accounts app, I’m going to define a custom ASP.NET Core Policy that requires the API call to provide a step-up token.

If there are terms in here that don’t seem immediately obvious, check out the docs on Policy-based authorization in ASP.NET Core. It’s a complex topic, but the docs do a pretty good job of breaking it down.

Let’s take a look at an API call endpoint that requires step-up:

```csharp
[ApiController]
[Route("api/user")]
[Authorize(ApiScopePolicy.WriteUser)]
public class UserApiController : Controller
{
    [HttpPost("totp-enable")]
    [Authorize("require-stepup")]
    public async Task<IActionResult> EnableTotp(TotpApiEnableModel model)
    {
        // ... do stuff ...
    }
}
```

That Authorize attribute I placed on the action method specifies that we want to enforce a require-stepup policy on this action. Authorize attributes are additive, so a request to EnableTotp requires both our normal WriteUser policy and our step-up policy.

Defining our Policy

To define our require-stepup policy, let’s jump over to our Startup class; specifically, in ConfigureServices, where we set up Authorization using the AddAuthorization method:

```csharp
services.AddAuthorization(options =>
{
    // Other policies omitted...

    options.AddPolicy("require-stepup", policy =>
    {
        policy.AddAuthenticationSchemes("local-api-scheme");
        policy.RequireAuthenticatedUser();

        // Add a new requirement to the policy (for step-up).
        policy.AddRequirements(new StepUpRequirement());
    });
});
```

The ‘local-api-scheme’ is the built-in scheme provided by IdentityServer for protecting local API calls.

That requirement class, StepUpRequirement, is just a simple marker class for indicating to the policy that we need step-up. It’s also how we wire up a handler to check that requirement:

```csharp
public class StepUpRequirement : IAuthorizationRequirement
{
}
```

Defining our Authorization Handler

We now need an Authorization Handler that lets us check incoming requests meet our new step-up requirement.

So, let’s create one:

```csharp
public class StepUpAuthorisationHandler : AuthorizationHandler<StepUpRequirement>
{
    private const string StepUpTokenHeader = "X-Authorization-StepUp";

    private readonly IHttpContextAccessor _httpContextAccessor;
    private readonly ITokenValidator _tokenValidator;

    public StepUpAuthorisationHandler(
        IHttpContextAccessor httpContextAccessor,
        ITokenValidator tokenValidator)
    {
        _httpContextAccessor = httpContextAccessor;
        _tokenValidator = tokenValidator;
    }

    /// <summary>
    /// Called by the framework when we need to check a request.
    /// </summary>
    protected override async Task HandleRequirementAsync(
        AuthorizationHandlerContext context,
        StepUpRequirement requirement)
    {
        // Only interested in authenticated users.
        if (!context.User.IsAuthenticated())
        {
            return;
        }

        var httpContext = _httpContextAccessor.HttpContext;

        // Look for our special request header.
        if (httpContext.Request.Headers.TryGetValue(StepUpTokenHeader, out var stepUpHeader))
        {
            var headerValue = stepUpHeader.FirstOrDefault();

            if (!string.IsNullOrEmpty(headerValue))
            {
                // Call our method to check the token.
                var validated = await ValidateStepUp(context.User, headerValue);

                // Token was valid, so succeed.
                // We don't explicitly have to fail, because that is the default.
                if (validated)
                {
                    context.Succeed(requirement);
                }
            }
        }
    }

    private async Task<bool> ValidateStepUp(ClaimsPrincipal user, string header)
    {
        // Use the normal token validator to check the access token is valid, and contains our
        // special expected scope.
        var validated = await _tokenValidator.ValidateAccessTokenAsync(header, "account-stepup");

        if (validated.IsError)
        {
            // Bad token.
            return false;
        }

        // Validate that the step-up token is for the same client as the access token.
        var clientIdClaim = validated.Claims.FirstOrDefault(x => x.Type == JwtClaimTypes.ClientId);

        if (clientIdClaim is null || clientIdClaim.Value != user.FindFirstValue(JwtClaimTypes.ClientId))
        {
            return false;
        }

        // Confirm a subject is supplied.
        var subjectClaim = validated.Claims.FirstOrDefault(x => x.Type == JwtClaimTypes.Subject);

        if (subjectClaim is null)
        {
            return false;
        }

        // Confirm that the subject of the stepup and the current user are the same.
        return subjectClaim.Value == user.FindFirstValue(JwtClaimTypes.Subject);
    }
}
```

Again, let’s take a look at the important bits of the class:

- The handler derives from AuthorizationHandler<StepUpRequirement>, indicating to ASP.NET that we are a handler for our custom requirement.

- We stop early if there is no authenticated user; that’s because the step-up token is only valid for a user who is already logged in.

- We inject and use IdentityServer’s ITokenValidator interface to let us validate the token using ValidateAccessTokenAsync; we specify the scope we require.

- We check that the client ID of the step-up token is the same as the regular access token used to authenticate with the API.

- We check that the subjects match (i.e. this step-up token is for the same user).
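The client and subject comparisons are plain claim lookups, so they can be tried out in isolation from IdentityServer. The sketch below pulls them into a standalone helper purely for illustration; StepUpClaimCheck is a hypothetical name, and it uses the literal claim names "client_id" and "sub" (the values behind JwtClaimTypes.ClientId and JwtClaimTypes.Subject) so that it only depends on System.Security.Claims.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Security.Claims;

public static class StepUpClaimCheck
{
    // Literal values of JwtClaimTypes.ClientId and JwtClaimTypes.Subject.
    private const string ClientIdClaim = "client_id";
    private const string SubjectClaim = "sub";

    // True only if the step-up token's client and subject both match the
    // claims on the already-authenticated user.
    public static bool Matches(IEnumerable<Claim> stepUpClaims, ClaimsPrincipal user)
    {
        var claims = stepUpClaims.ToList();

        return SameValue(claims, user, ClientIdClaim)
            && SameValue(claims, user, SubjectClaim);
    }

    private static bool SameValue(List<Claim> stepUpClaims, ClaimsPrincipal user, string type)
    {
        var expected = user.FindFirst(type)?.Value;
        var actual = stepUpClaims.FirstOrDefault(c => c.Type == type)?.Value;

        // A claim missing on either side counts as a mismatch.
        return expected != null && expected == actual;
    }
}
```

With a user carrying client_id "portal" and sub "42", a step-up token with the same two claims matches, while one issued for sub "99" or for a different client does not.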

The final hurdle is to register our authorization handler in our Startup class:

```csharp
services.AddSingleton<IAuthorizationHandler, StepUpAuthorisationHandler>();
```

Wrapping Up

There you go, we’ve now got a secondary access token being issued to indicate step-up has been done, and we’ve got a custom authorization handler to check our new token.